Trust needs to live somewhere

If the content is synthetic, what do we assume about the product?

Lately I have been seeing a particular kind of enthusiasm show up in marketing conversations. A company announces it has replaced hundreds of thousands of dollars in content spend with AI agents. Ten agents, or twenty, working around the clock, creating variations, repurposing content, niching, translating, localizing. A 24-hour content engine pushing thousands of posts a day. It’s cool and it smells like progress. But what rarely gets asked is a simpler question:

If the content is synthetic, what do we assume about the product?

I work for a company whose core is heavily centered on machine learning, so this is not a moral objection to AI in communication; it is a perceptual one. Humans have always made unconscious judgments about credibility based on the appearance of effort. Not effort as pure aesthetics, but effort constrained by reality: time, cost, risk, and tradeoffs. Historically, production value functioned as a costly signal. Not because expensive meant true, but because expense implied commitment. You could fake sincerity, but you could not fake effort cheaply, and that friction filtered out a lot of nonsense.

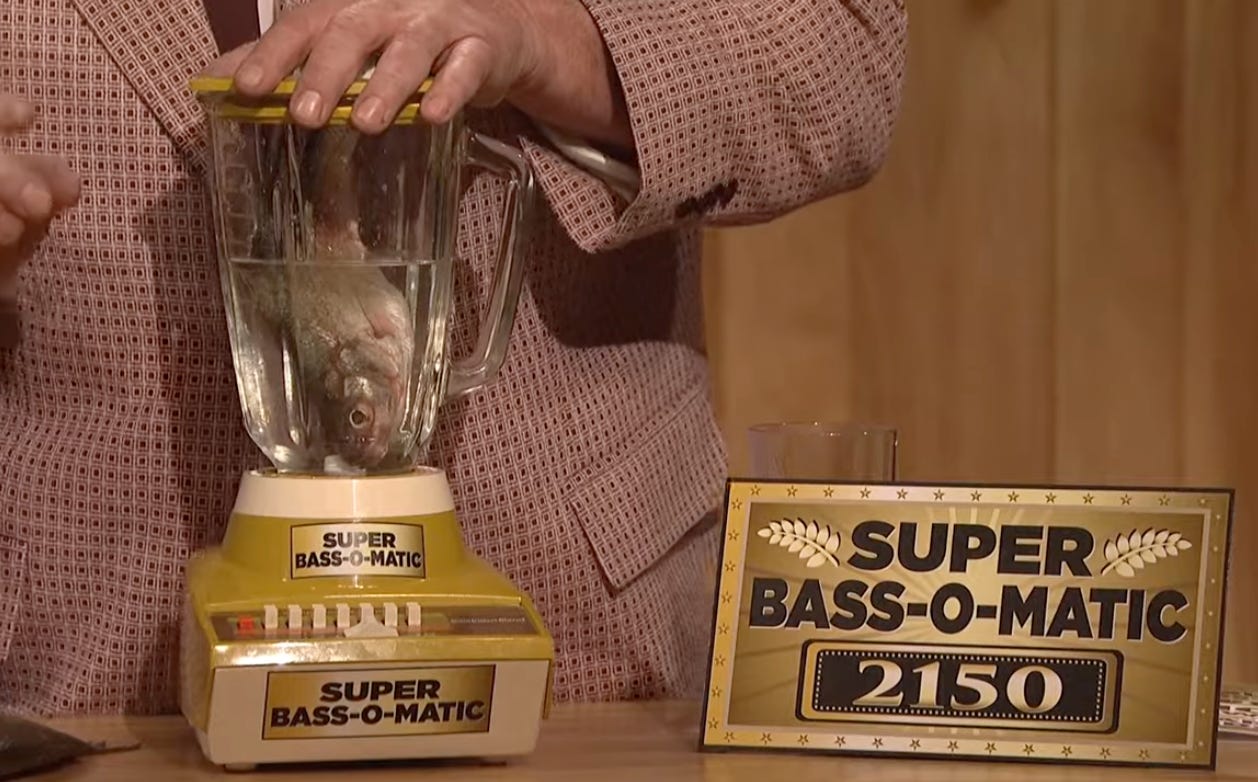

In the early days of syndicated television advertising, audiences learned very quickly to sense mismatches. When the promise outpaced the perceived investment, it registered as off. Those commercials did not just fail, they became jokes. Recurring fake commercial sketches on SNL were built around this exact tension: overconfident claims delivered with just enough polish to be aired, but not enough reality behind them to be believed. People laughed because they instantly recognized the gap; they always have.

Today, when someone touts the value of the content engine that never sleeps, what I hear is a voice that is everywhere, and when a voice is everywhere, responsibility becomes difficult to locate. What does that do for a brand? I suppose this is fine in some categories, where authenticity is not the product and consumers are content to assume the product itself is disposable, although I am not entirely sure where that boundary sits.

The line becomes clear when trust is cumulative, as in healthcare, finance, infrastructure, education, or anything involving risk, judgment, or accountability. Here, communication is not just marketing; it is a proxy for responsibility. When something goes wrong, someone has to answer for it, and people sense whether that accountability exists long before it is tested.

In this context, something like an explainer video, well crafted with AI, feels pretty acceptable. Such videos are instrumental: they clarify, they orient, and they get out of the way. As a product company, we are grateful that we do not need to spend hundreds of thousands of dollars here and can instead put that investment into product quality and support.

But volumized hype content does the opposite. It asks for belief without proportional investment or risk. That has never worked particularly well in the past, and there is little reason to believe it will start working now. The deeper point is that authenticity does not need to mean human made; we should absolutely use the tools available to us. But authenticity does require obvious effort, specifically effort constrained by reality. Constraints leave fingerprints, and those fingerprints cognitively signal responsibility to an audience.

In medical technology, responsibility is far more than a brand position; it is operational. We are audited on it. At Sparrow, we invested in it long before we had a product, through quality systems, documentation, traceability, and review. Good science, done properly, with real people standing behind it. That posture cannot be bolted on later, and I am not sure it can be convincingly simulated at scale.

I am glad we do not need to spend huge sums on explainer videos just to look legitimate. Looking good should always be in service of getting it right, not the other way around. The mistake is believing that the pursuit of frictionless communication comes without loss. When claims are easy to generate and endlessly repeated, accountability becomes harder to locate. In domains where failure has consequences, people instinctively look for signs that someone is prepared to stand behind what is being said.

Trust needs to live somewhere.

Mark, "constraints leave fingerprints" is the key insight here. When effort signals disappear, so does locatable accountability.

But what if the constraints shift rather than vanish? At Karora, our AI agents don't generate content—they process satellite imagery and sensor data so a 3-person team can deliver enterprise-grade carbon verification. The trust signal becomes "we can show you the data chain and the math" rather than "we made this by hand."

You're right that trust needs to live somewhere. I'd argue it can live in architecture—if accountability is built in from the ground up.