Screening, AI, and the Persistence of Uncertainty

Collecting the data is hard but the real obstacle is knowing what the data means

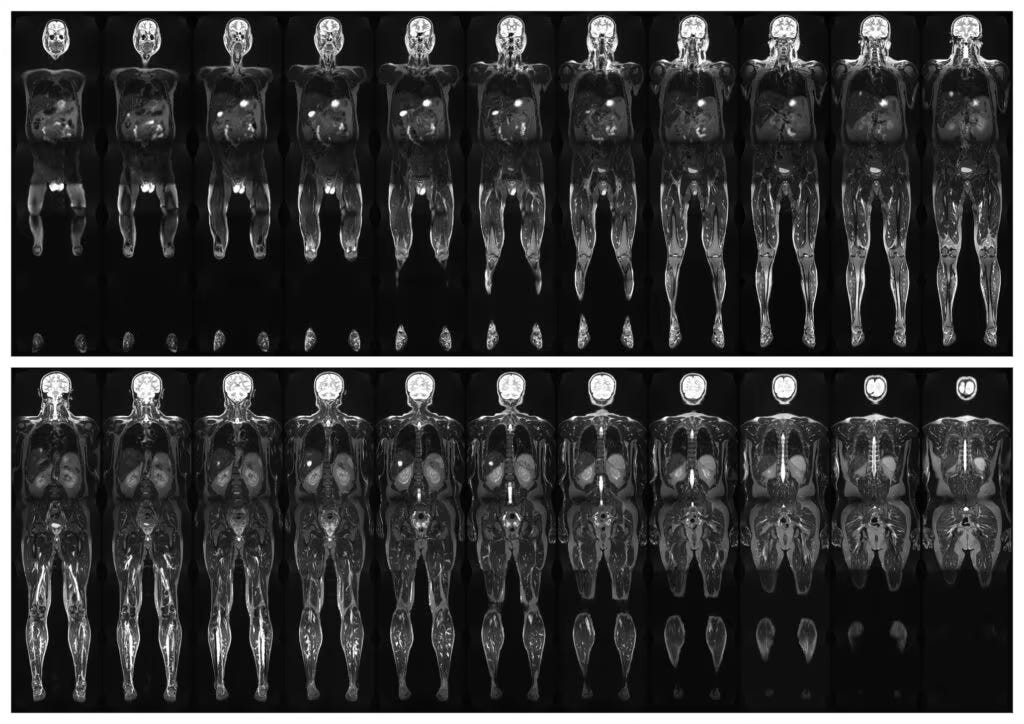

There is a growing belief that medicine is on the verge of a major breakthrough: combine full-body MRI scans, blood biomarkers, genomics, wearables, and artificial intelligence, and disease will become visible long before symptoms appear. Cancer, aneurysms, neurodegeneration, cardiovascular disease, all quietly discovered early by systems smart enough to separate dangerous signals from harmless biological noise.

It is an appealing vision and parts of it are likely directionally correct; AI absolutely belongs in the future of medicine. But there is a difference between “AI will help” and “AI will solve this very soon,” and the gap between those two statements is enormous.

The misunderstanding starts with a basic assumption most people have about medicine: if we can look harder and gather more data, outcomes must improve. Unfortunately, biology is not that cooperative. Human bodies are full of irregularities: scars, cysts, nodules, asymmetries, inflammatory changes, and anatomical quirks. By middle age, almost everyone has “something.”

MRI scanners are very good at finding abnormalities, but the harder problem is determining which abnormalities matter. That distinction is where the optimism around full-body scanning often collapses.

A full-body MRI may identify a small pancreatic cyst, thyroid nodule, adrenal lesion, brain spot, liver irregularity, or vascular anomaly. Some of these findings are important, many are not, and some would never progress into meaningful disease during a person’s lifetime. Others are technically real but untreatable. Once discovered, however, they rarely disappear psychologically. They trigger follow-up imaging, biopsies, specialist referrals, repeat scans, and anxiety; sometimes surgery follows, and sometimes complications emerge from procedures that were never necessary in the first place.

This is not a fringe problem. It is a mathematical one.

People hear phrases like “95% accurate” and imagine certainty, but screening in low-prevalence populations behaves very differently: even highly accurate tests produce large numbers of false alarms when disease is relatively uncommon.

Suppose a disease affects 0.5% of asymptomatic adults and imagine an AI model with excellent performance:

- 90% sensitivity
- 98% specificity

Those numbers sound extraordinary, yet in 100,000 people:

- about 500 truly have disease
- the model correctly identifies roughly 450
- but it also falsely flags about 2,000 healthy people

So the system produces about 2,450 positive results, but only 450 of them, roughly 18%, are true positives. Even with excellent performance, most positive results are still false alarms.
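The arithmetic above can be reproduced in a few lines. This is a minimal sketch for illustration only; the function name `screening_counts` and its parameter names are invented here. It works with unrounded figures, so it reports 1,990 false positives and 2,440 total positives where the text rounds to 2,000 and 2,450.

```python
def screening_counts(population, prevalence, sensitivity, specificity):
    """Expected screening outcomes for a single test in a single population."""
    diseased = population * prevalence
    healthy = population - diseased
    true_pos = diseased * sensitivity          # diseased people correctly flagged
    false_pos = healthy * (1 - specificity)    # healthy people wrongly flagged
    # Positive predictive value: share of positive results that are real disease.
    ppv = true_pos / (true_pos + false_pos)
    return {"true_pos": true_pos, "false_pos": false_pos, "ppv": ppv}


# Figures from the example: 0.5% prevalence, 90% sensitivity, 98% specificity.
result = screening_counts(100_000, 0.005, 0.90, 0.98)
print(f"true positives:  {result['true_pos']:.0f}")
print(f"false positives: {result['false_pos']:.0f}")
print(f"share of positives that are real: {result['ppv']:.1%}")
```

Changing only `prevalence` in the call shows how strongly the share of real positives depends on how common the disease is, even when the test itself is unchanged.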

AI does not repeal Bayes’ theorem no matter how many GPUs are involved.

Now extend that problem across dozens of diseases instead of one. A full-body screening platform is not trying to detect a single condition; it is attempting to identify cancers, vascular disease, neurological disease, inflammatory conditions, organ abnormalities, and structural irregularities simultaneously. Each additional target introduces new false positives, new ambiguities, and new follow-up pathways.

If a platform screened healthy people across 50 disease categories using models that were each 98% specific, and the models’ errors were independent, roughly 64% of healthy people would still be falsely flagged for at least one disease, while about 26% would be flagged for two or more. In other words, even technically impressive systems can create a world where most healthy people leave with a growing collection of uncertain medical findings. This is essentially the current full-body scan problem, now wearing an AI hat.
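The multi-disease figures follow from a simple binomial calculation. The sketch below is an illustration under one strong assumption that the text does not state explicitly: each model's false positives are statistically independent of the others. The function name is hypothetical. Under that assumption it gives roughly 64% for at least one false flag and a little over a quarter for two or more.

```python
from math import comb


def prob_at_least_k_false_flags(n_tests, specificity, k):
    """P(a healthy person gets k or more false flags across n_tests models).

    Assumes every model has the same specificity and that errors are
    independent, which real correlated findings would violate.
    """
    fp_rate = 1 - specificity
    # Binomial probability of fewer than k false flags.
    p_fewer = sum(
        comb(n_tests, i) * fp_rate**i * specificity ** (n_tests - i)
        for i in range(k)
    )
    return 1 - p_fewer


at_least_one = prob_at_least_k_false_flags(50, 0.98, 1)
at_least_two = prob_at_least_k_false_flags(50, 0.98, 2)
print(f"flagged for at least one disease:  {at_least_one:.1%}")
print(f"flagged for two or more diseases: {at_least_two:.1%}")
```

If errors are positively correlated (for example, one ambiguous scan region triggering several models at once), fewer people would be flagged overall, but those who are flagged would tend to collect more findings each.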

At this point many people make a perfectly reasonable argument: “I still want to know.”

That instinct should not be dismissed. High-agency people in particular tend to prefer uncomfortable truth over passive ignorance; many would gladly accept some false positives in exchange for reducing the chance of catastrophic surprise (I know I would). The idea sounds rational because people imagine the downside as a quick follow-up scan and a temporary scare.

Medicine often does not resolve ambiguity that cleanly. What begins as “probably nothing” can evolve into years of serial imaging, specialist referrals, contradictory opinions, biopsies, procedural risks, and indefinite surveillance. The real burden is not usually one false alarm; it is entry into a long chain of unresolved probabilities.

People may think they are buying knowledge, but they are often buying membership in a very expensive uncertainty club. The deeper issue is that modern imaging increasingly creates a third category between healthy and diseased: “abnormal, uncertain, probably benign, monitor indefinitely.” And that category is vastly larger than most people realize. A person may believe they are comfortable with false positives, but what they often mean is they are comfortable with one clarified false alarm; they are not imagining a suspicious lesion followed every six months for eight years while various experts estimate the malignancy risk somewhere between “unlikely” and “we should keep an eye on it.”

The common response to all of this is predictable: “Fine. Current medicine is noisy. AI will solve that part.” Perhaps partially, but this is where the engineering reality becomes important. Training a large language model and training a generalized disease prediction system are fundamentally different problems. Language models learn from vast amounts of largely unlabeled human text, while medicine requires ground truth, and medical ground truth is slow, expensive, and often ambiguous.

To build a truly useful preventive AI system, researchers would need massive datasets containing imaging, blood biomarkers, genomics, wearable data, pathology results, interventions, and longitudinal outcomes. Collecting the data is hard, but the real obstacle is knowing what the data means.

A useful medical label is not:

“Did the scan show an abnormality?”

It is:

“Did this abnormality become clinically important, and would earlier intervention have improved the patient’s life?”

That distinction can take years to resolve. A pancreatic cyst seen today may not reveal its significance for a decade, a tiny brain lesion may never matter, and a slow-growing cancer may never become symptomatic before the patient dies of something else. Time itself becomes part of the dataset.

Even with unlimited GPUs and unlimited money, biology still unfolds at biological speed. The infrastructure requirements for this are staggering; not startup-scale staggering, but civilization-scale staggering. Imagine attempting to build a platform that meaningfully screens for 50 major diseases. For each disease, researchers would need enough confirmed cases across age groups, sexes, ethnicities, disease stages, scanner variations, medication effects, comorbidities, benign mimics, inflammatory states, and normal aging patterns. Even a simplified version quickly explodes into over a million deeply characterized patients. Then comes the real burden: longitudinal follow-up. Not weeks. Years.

Patients would need repeat imaging, repeat labs, outcome tracking, pathology linkage, treatment records, and adjudicated endpoints. Hospitals, imaging centres, insurers, regulators, and health systems would all need to cooperate at extraordinary scale. Data standardization alone would be a monumental project: scanner calibration varies, reporting language varies, clinical pathways vary, and human follow-up varies. Even then, the system would still need retrospective model development, external validation across new hospitals and populations, prospective observational deployment, and finally randomized utility studies asking the only question that actually matters:

Did people live longer or better lives because of this system? Not: “Did we find more abnormalities?”

Medicine has repeatedly learned that detecting more disease indicators in the general public does not automatically improve outcomes. Sometimes it simply creates earlier anxiety, unnecessary procedures, and additional cost without changing the final outcome.

Screening is tricky business, and an MRI, monster compute capacity, and a mountain of venture capital will not magically overcome that reality. The more likely near-term path is narrower AI applications operating on enriched patient cohorts, where the pre-test probability of disease is already high, for diseases where we already have effective therapies and established care pathways.

A murmur with a certain acoustic profile, detected by AI in a 65-year-old, has a reasonably good chance of indicating structural heart disease before that person becomes obviously symptomatic. That is a very different problem from asking a giant multimodal system to infer hundreds of possible diseases simultaneously from a sea of weak signals and incidental findings. In the first case, the AI is helping create a higher-probability patient flow inside infrastructure that already exists today; intervention becomes easier for the patient, easier for the physician, and easier for the health system itself.
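Why enrichment matters can be seen by holding the test fixed and varying only how common the disease is in the group being tested. The sketch below reuses the 90% sensitivity and 98% specificity from the earlier example. The higher prevalence values are hypothetical, chosen purely for illustration; they are not estimates for the murmur example or for any real cohort.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Bayes' theorem: probability of disease given a positive result."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


# 0.5% is the general-population figure used earlier in the article.
# 5% and 20% are hypothetical "enriched cohort" values, not real estimates.
for prevalence in (0.005, 0.05, 0.20):
    ppv = positive_predictive_value(prevalence, sensitivity=0.90, specificity=0.98)
    print(f"prevalence {prevalence:>5.1%} -> positive result is real {ppv:.1%} of the time")
```

With the same model, the share of positives that are real rises from about 18% at 0.5% prevalence to about 70% at 5% and about 92% at 20%. The test did not get better; the population it was applied to did.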

This is why many physicians remain cautious about broad untargeted scanning, not because they oppose AI or prevention, but because they understand how difficult it is to distinguish meaningful signal from biological noise at population scale.

None of this means the future is hopeless. AI will almost certainly improve interpretation of imaging and biomarkers, dramatically improve targeted screening pathways, and make longitudinal monitoring far more sophisticated. Risk stratification will improve and multimodal analysis will become clinically useful in specific domains; however, there is an important difference between “incrementally improving medicine” and “building a deterministic early disease oracle.” The latter is probably extraordinarily far away.

The deeper issue is philosophical as much as technical. Modern culture increasingly treats uncertainty as a solvable engineering failure: if we just gather enough data, deploy enough sensors, and train sufficiently powerful models, ambiguity will disappear. Medicine keeps rediscovering the same lesson: more information is not always more truth.

Sometimes it is simply better illuminated noise.